Now - many of you know that my roots are not in virtualization, but on the network side, and I refused to leave it at that. To sum up that story, the customer was indeed using port channels from a Cisco 3750 stack, and after reading a fairly helpful KB article from VMware ( ESXi Host Requirements for Link Aggregation), I had the documentation I needed to confirm with the customer that the appropriate policy for this topology was “route based on IP hash”. Now, with respect to the problem I was experiencing in the first section I had seen this behavior before, and knew that in the network topologies I’d worked with (etherchannel to the hosts in at least some way), the first policy where traffic is routed based on the originating virtual port ID would produce problems. Should that pNIC fail, the next will be used. Suffice it to say it’s really not load balancing at all, it’s more of a hardcore deterministic method of placing traffic on the pNIC that you want. Use explicit failover order - not used very often.

#Nic teaming vmware esxi 6 mac

Route based on source MAC hash - this is another hash-based selection mechanism, but since it’s only source based, and will correspond to an actual vNIC, this produces much the same behavior as the very first policy. Traffic from the same IP address within the host to the same IP address outside the host will always leave on the same pNIC, but other traffic flows may fall on another NIC, even if the virtual source is the same. Route based on IP Hash - this is nothing new to anyone familiar with 802.3ad the idea is that a given packet has a hash made of it’s source and destination IP addresses, and the resulting math will determine which link is used to egress from the host. Traffic leaving this vNIC destined outside the host will always leave on that pre-determined pNIC, unless that pNIC were to fail. Route based on the originating virtual port ID - this selects an uplink when a virtual device (virtual machine or vmkernel) attaches to the vSwitch. I’ll assume you’ve at least read those two links by now, so as a quick summary, the available load-balancing policies on a vSwitch are: If you prefer a more personal approach, this article by Ken Cline is very popular with respect to this topic.

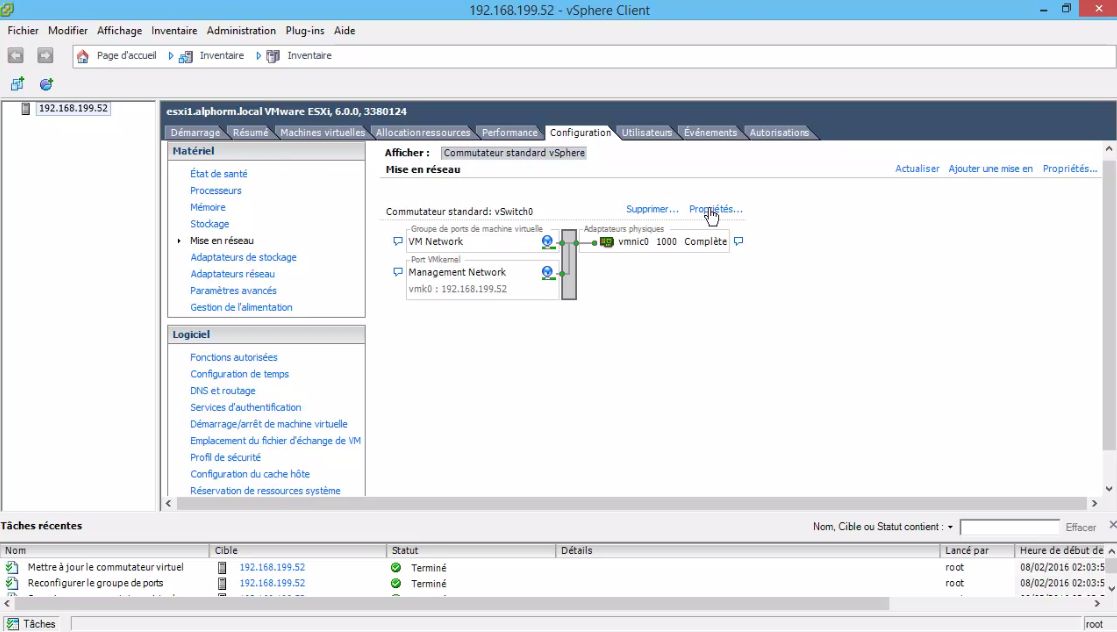

If you prefer to hear it straight from the horse’s mouth, try VMware’s Virtual Networking Concepts whitepaper. The myriad of blog posts concerning vSphere Standard vSwitch redundancy typically will show a topology like this:Īs mentioned before, you’ll have no trouble finding posts that explain the basics of how each vSwitch NIC Teaming policy works. I SSH’d into each of the hosts and discovered that they could not ping each other over the management network, and they definitely should have been able to. It seemed like vCenter was able to get to the hosts, but the client kept refreshing like it was losing connectivity several times a second. This state is reported by a vSphere HA master agent that is in a partition other than the one containing the host.ĭuncan Epping has a great article on Host Isolation, specifically with regards to this error message as well. VSphere has detected that this host is in a different network partition that the master to which vcenter server is connected, or the Vsphere HA Agent on the host is alive and has management network connectivity but the management network has been partitioned. It was pretty bad - the vSphere client was laggy, vCenter’s resource utilization was pretty high, and I was getting strange messages like: A customer was having problems getting vSphere HA to converge properly, and was also having intermittent connectivity between vCenter and the ESXi hosts. Since this post was inspired by an experience of mine, I will briefly explain the problem symptoms that surfaced as a result of incorrect settings that will be explored later in the post. So…this article will be catered towards a very specific problem.

It offers minimal complexity, while also providing the best load-balancing capabilities for network devices utilizing a vSwitch (Virtual Machine OR vmkernel). There are a million articles out there on ESXi vSwitch Load Balancing, many of which correctly point out that the option for routing traffic based on IP Hash is probably the best option, if your upstream switch is running 802.3ad link aggregation to the ESXi hosts.
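The sketch below makes the difference between the first three teaming policies concrete. It shows how each one might pick an uplink for a single VM talking to several destinations. The selection formulas are illustrative assumptions and may not match what ESXi does internally:

- port ID modulo the number of uplinks;
- last byte of the source MAC modulo the number of uplinks;
- XOR of the source and destination IPv4 addresses modulo the number of uplinks.

The uplink names, port ID, MAC and IP addresses are hypothetical.

```python
import ipaddress
from typing import List

# Hypothetical uplinks on a standard vSwitch.
UPLINKS = ["vmnic0", "vmnic1"]


def by_port_id(port_id: int, uplinks: List[str]) -> str:
    """Originating virtual port ID: depends only on the vSwitch port the
    vNIC is attached to (assumed formula: port_id mod N)."""
    return uplinks[port_id % len(uplinks)]


def by_source_mac(src_mac: str, uplinks: List[str]) -> str:
    """Source MAC hash: depends only on the vNIC's MAC address
    (assumed formula: last byte of the MAC mod N)."""
    last_byte = int(src_mac.split(":")[-1], 16)
    return uplinks[last_byte % len(uplinks)]


def by_ip_hash(src_ip: str, dst_ip: str, uplinks: List[str]) -> str:
    """IP hash: depends on the source/destination IP pair
    (assumed formula: XOR of the two 32-bit addresses mod N)."""
    src = int(ipaddress.IPv4Address(src_ip))
    dst = int(ipaddress.IPv4Address(dst_ip))
    return uplinks[(src ^ dst) % len(uplinks)]


if __name__ == "__main__":
    # One hypothetical VM: vSwitch port 7, a VMware-style MAC, one IP.
    vm_port, vm_mac, vm_ip = 7, "00:50:56:aa:bb:0c", "10.0.0.10"
    for dst in ["10.0.1.1", "10.0.1.2", "10.0.1.3", "10.0.1.4"]:
        print(
            f"{dst:<10} port-id={by_port_id(vm_port, UPLINKS)} "
            f"src-mac={by_source_mac(vm_mac, UPLINKS)} "
            f"ip-hash={by_ip_hash(vm_ip, dst, UPLINKS)}"
        )
```

With these made-up values, the port ID and source MAC policies send all four flows out a single uplink (vmnic1 and vmnic0 respectively). IP hash alternates the flows between vmnic1 and vmnic0 because the destination address is part of the hash.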
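The explicit failover order policy is deterministic rather than balanced, so it can be modeled in a few lines. This is a minimal sketch, assuming the rule is: all traffic uses the first healthy adapter in the Active list, and the Standby list is used only when no Active adapter is up. Because the function is stateless, it also assumes failback is enabled: a recovered higher-priority uplink is picked again. The `Uplink` type and the vmnic names are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Uplink:
    name: str             # hypothetical adapter name, e.g. "vmnic0"
    link_up: bool = True  # stands in for whatever failure detection is used


def explicit_failover(active: List[Uplink], standby: List[Uplink]) -> Optional[Uplink]:
    """Return the single uplink that carries all traffic: the first healthy
    adapter in the Active list, else the first healthy Standby adapter.
    Stateless, so a recovered earlier uplink wins again (assumes failback)."""
    for uplink in active + standby:
        if uplink.link_up:
            return uplink
    return None  # no healthy uplink at all


if __name__ == "__main__":
    vmnic0, vmnic1, vmnic2 = Uplink("vmnic0"), Uplink("vmnic1"), Uplink("vmnic2")
    active, standby = [vmnic0, vmnic1], [vmnic2]

    print(explicit_failover(active, standby).name)  # vmnic0 carries everything
    vmnic0.link_up = False
    print(explicit_failover(active, standby).name)  # next in order: vmnic1
    vmnic1.link_up = False
    print(explicit_failover(active, standby).name)  # standby takes over: vmnic2
```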